This article is part of a larger series in which I detail the entire architecture. For now, I’m going to focus on working with Azure Cosmos DB data within a Logic App. I’ll show you how I query the data for specific values, loop through the results, and update the data. This is one of my first “real” projects with Cosmos DB, so there may be a few pieces I’m not doing in the most efficient manner, but hopefully you’ll get the idea. So, let’s check it out!

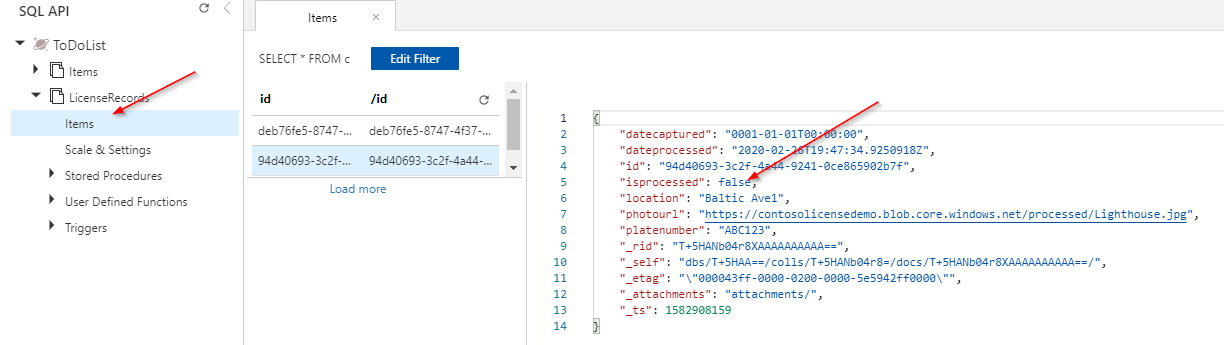

In this demo, I’m going to be updating Cosmos DB documents using a Logic App. I’ll cover a lot more about this data in other articles, but I wanted to show you what I’m working with as a start. For my demo, I’m working with License Plate details, including a plate number, date, location, and an isprocessed field. This field determines whether the plate information has been processed by my code.

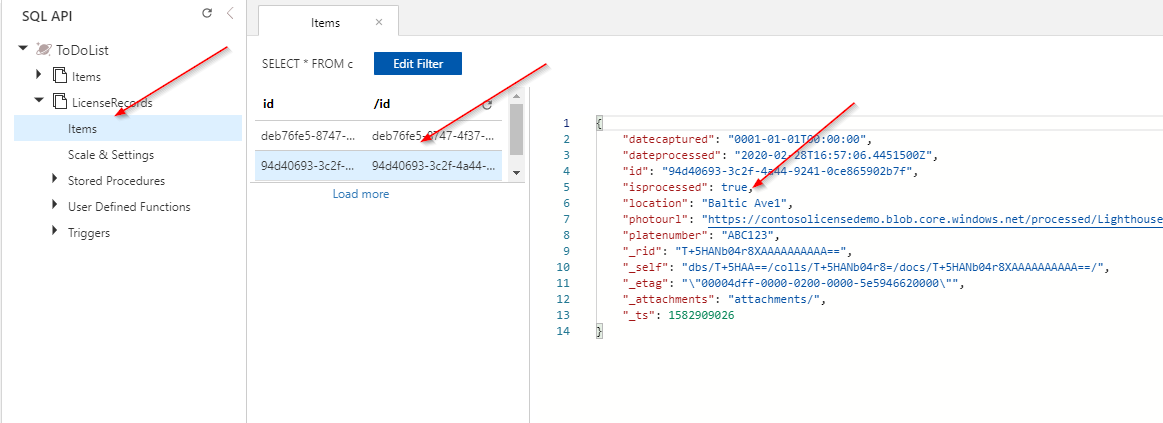

Here’s a view of some sample data. You can see that the isprocessed value is currently false. This will come into play during the demo, as it signifies what records I want to process with my Logic App. Exhilarating, I know.

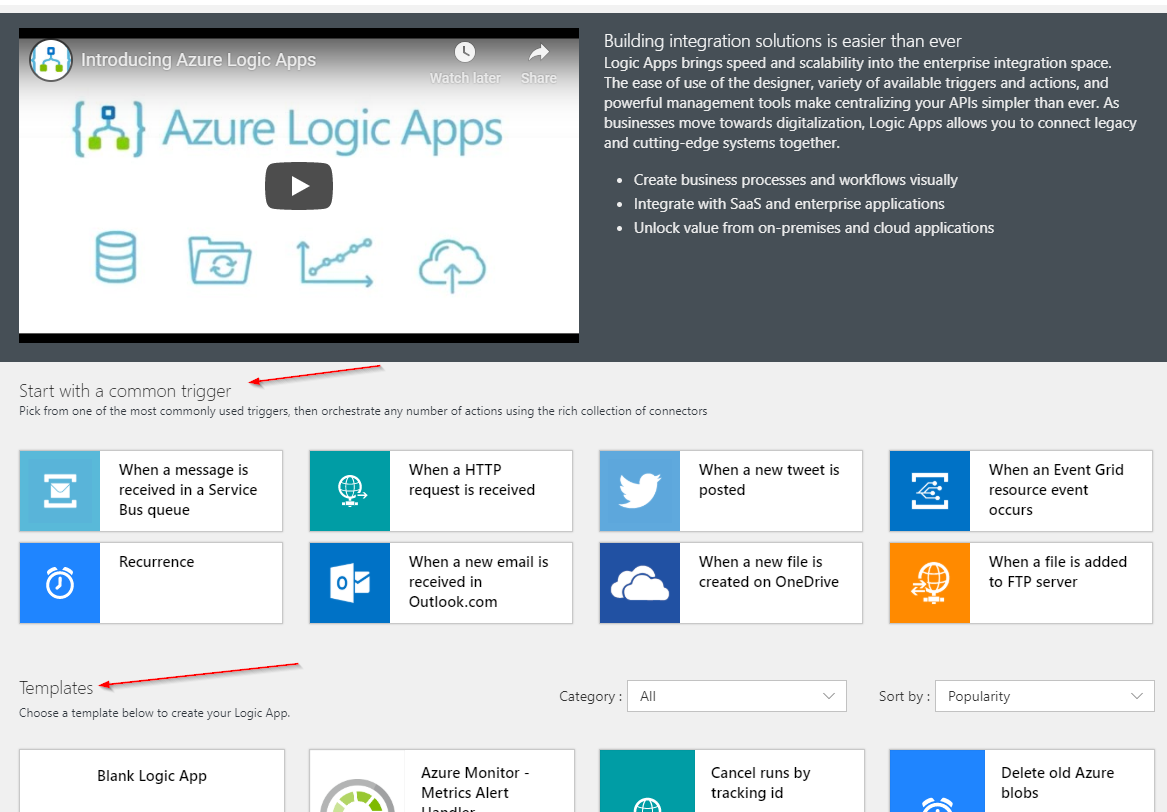

To get started, I create an Azure Logic App to work with. If you haven’t developed with this service yet, you’re missing out! Logic Apps provide a tremendous amount of functionality, with little or no code to implement. By using connectors to other systems, you can quickly execute Azure Functions, send emails, update data, and update your Twitter profile. And you can do it in a matter of minutes!

When creating a Logic App, you can choose one of the many templates, or start from scratch. The templates offer some pre-built workflows, allowing integration with OneDrive, SharePoint, and many other components.

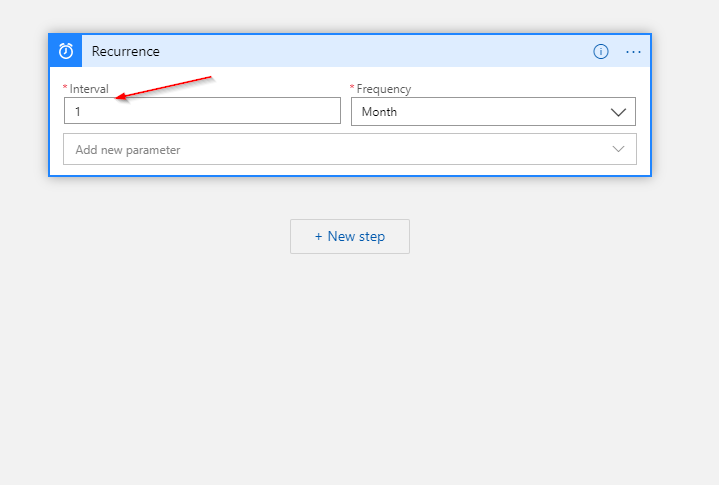

In my case, I start from scratch and choose the Recurrence trigger, which I configure so that my Logic App executes once a month.

You’ll need to decide how you want your Logic App to execute (At a set time every minute/hour/day/week/month? On demand? Etc.). This is mega-important, as you will be charged for each time your Logic App executes. Make sure you know what to expect!

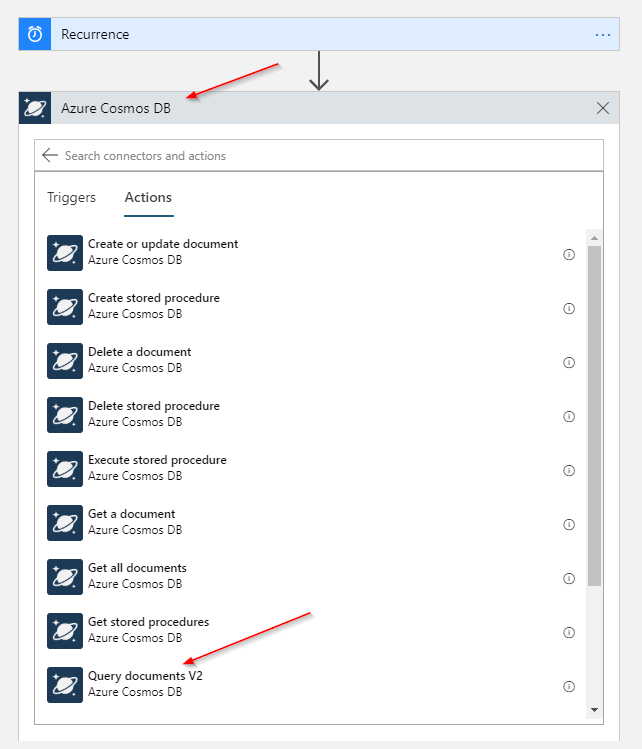

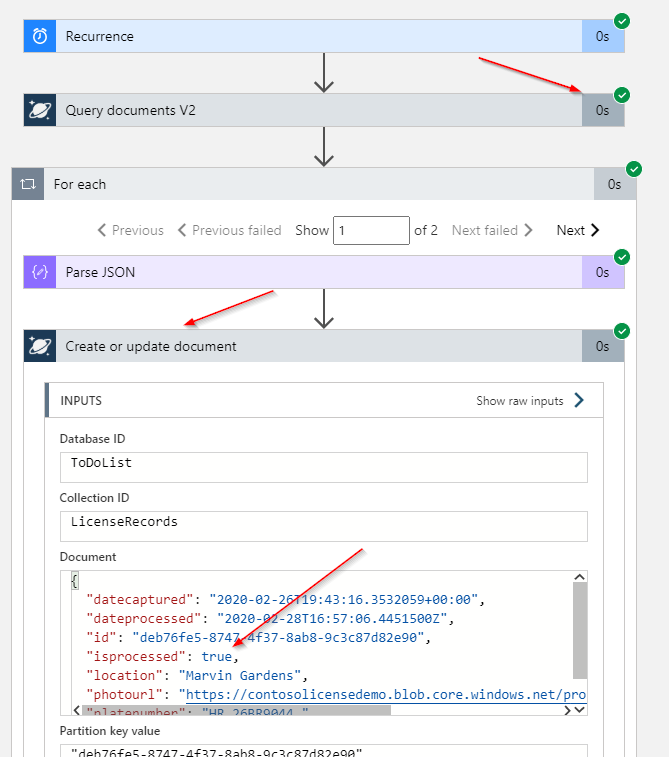
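To get a feel for how much the schedule matters, here is a minimal Python sketch that estimates how many times a Recurrence schedule fires in a month. It only counts executions and does not model actual pricing. The function name and the 30-day month are my own assumptions for illustration.

```python
# Rough estimate of how many times a Recurrence schedule fires in a month.
# Assumption: a month is treated as 30 days; this counts runs, not cost.
MINUTES_PER_UNIT = {
    "Minute": 1,
    "Hour": 60,
    "Day": 60 * 24,
    "Week": 60 * 24 * 7,
    "Month": 60 * 24 * 30,
}


def runs_per_30_days(frequency: str, interval: int = 1) -> int:
    """Return the approximate number of runs in 30 days (hypothetical helper)."""
    if interval < 1:
        raise ValueError("interval must be at least 1")
    minutes_between_runs = MINUTES_PER_UNIT[frequency] * interval
    return MINUTES_PER_UNIT["Month"] // minutes_between_runs


if __name__ == "__main__":
    for frequency in MINUTES_PER_UNIT:
        print(frequency, runs_per_30_days(frequency))
```

A monthly schedule like mine fires once, while an every-minute schedule fires tens of thousands of times over the same period, so it pays to pick the interval deliberately.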

The next step is to get my Cosmos DB data. To do this, I use the Azure Cosmos DB connector. This built-in connector supports many of the common tasks developers need when working with their data. For my workflow, I select the Query documents V2 task, which will allow me to pull data matching my criteria.

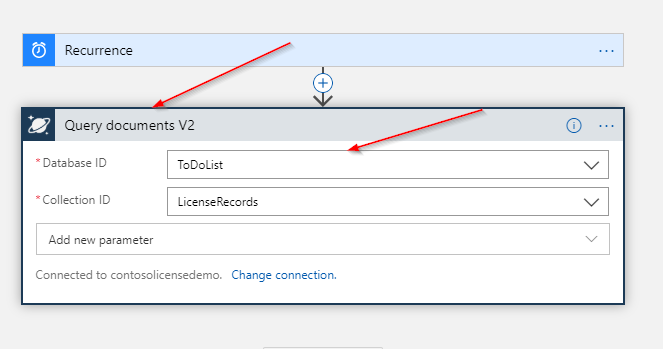

When I choose the Cosmos DB connector, I need to define the Database and Collection I will be querying.

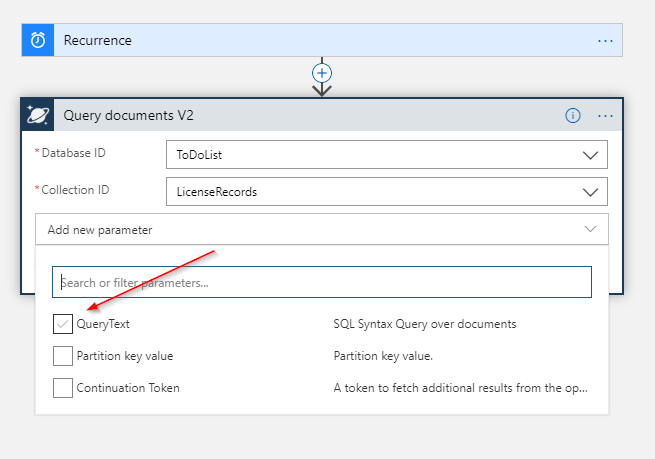

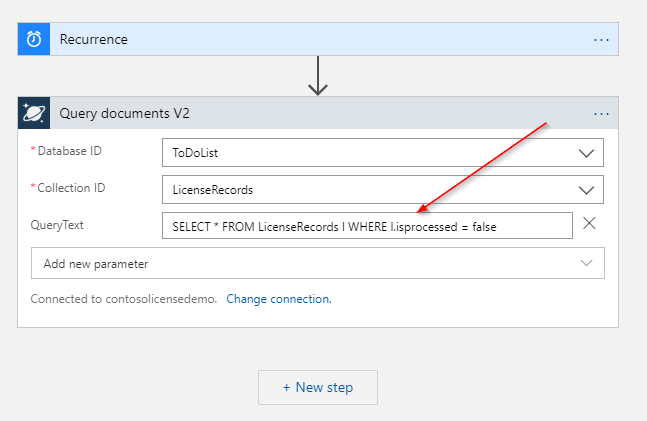

I then add the QueryText parameter, which allows me to specify my query.

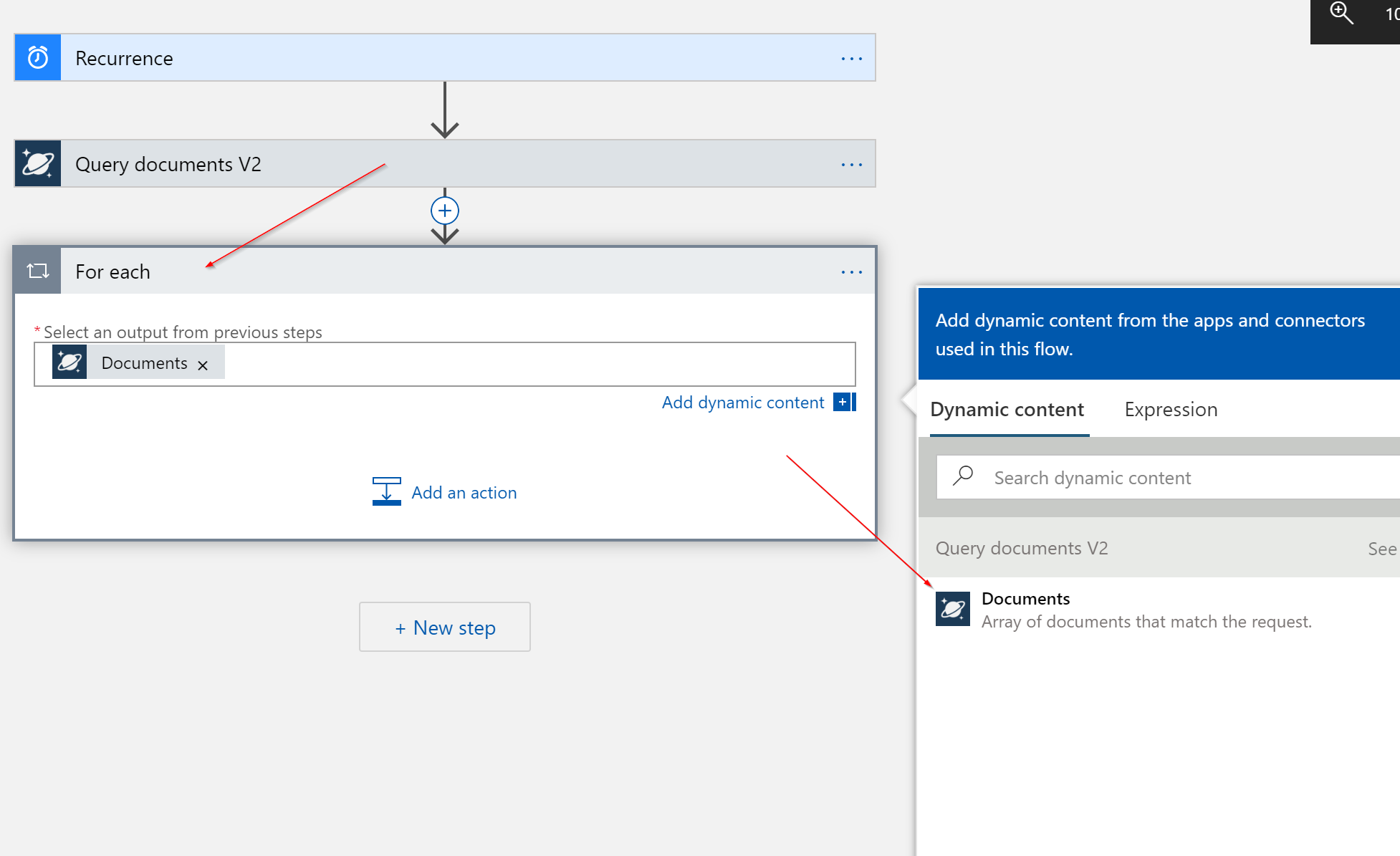
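For readers who can’t see the screenshot, here is a rough Python sketch of the kind of query this step runs. The query text is an assumption based on the isprocessed field described earlier, not necessarily the exact text I used, and the sample documents and the platenumber field name are made up for illustration. The function is a local stand-in that mimics the filter on an in-memory list, so it runs without a Cosmos DB account.

```python
# Assumed query text for the QueryText parameter (not copied from the screenshot).
QUERY_TEXT = "SELECT * FROM c WHERE c.isprocessed = false"

# Hypothetical sample documents; only id and isprocessed come from the article.
sample_documents = [
    {"id": "1", "platenumber": "ABC123", "isprocessed": False},
    {"id": "2", "platenumber": "XYZ789", "isprocessed": True},
    {"id": "3", "platenumber": "LMN456", "isprocessed": False},
]


def query_unprocessed(documents):
    """Local stand-in for the query: keep documents whose isprocessed is false."""
    # Documents without the field are skipped, as an equality filter would skip them.
    return [doc for doc in documents if doc.get("isprocessed") is False]


if __name__ == "__main__":
    print(QUERY_TEXT)
    for doc in query_unprocessed(sample_documents):
        print(doc["id"], doc["platenumber"])
```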

Once I have the data I’m looking for, I can loop through the documents and perform some updates. To do this, I select a new For each control, specifying the Documents output from the Query documents V2 step.

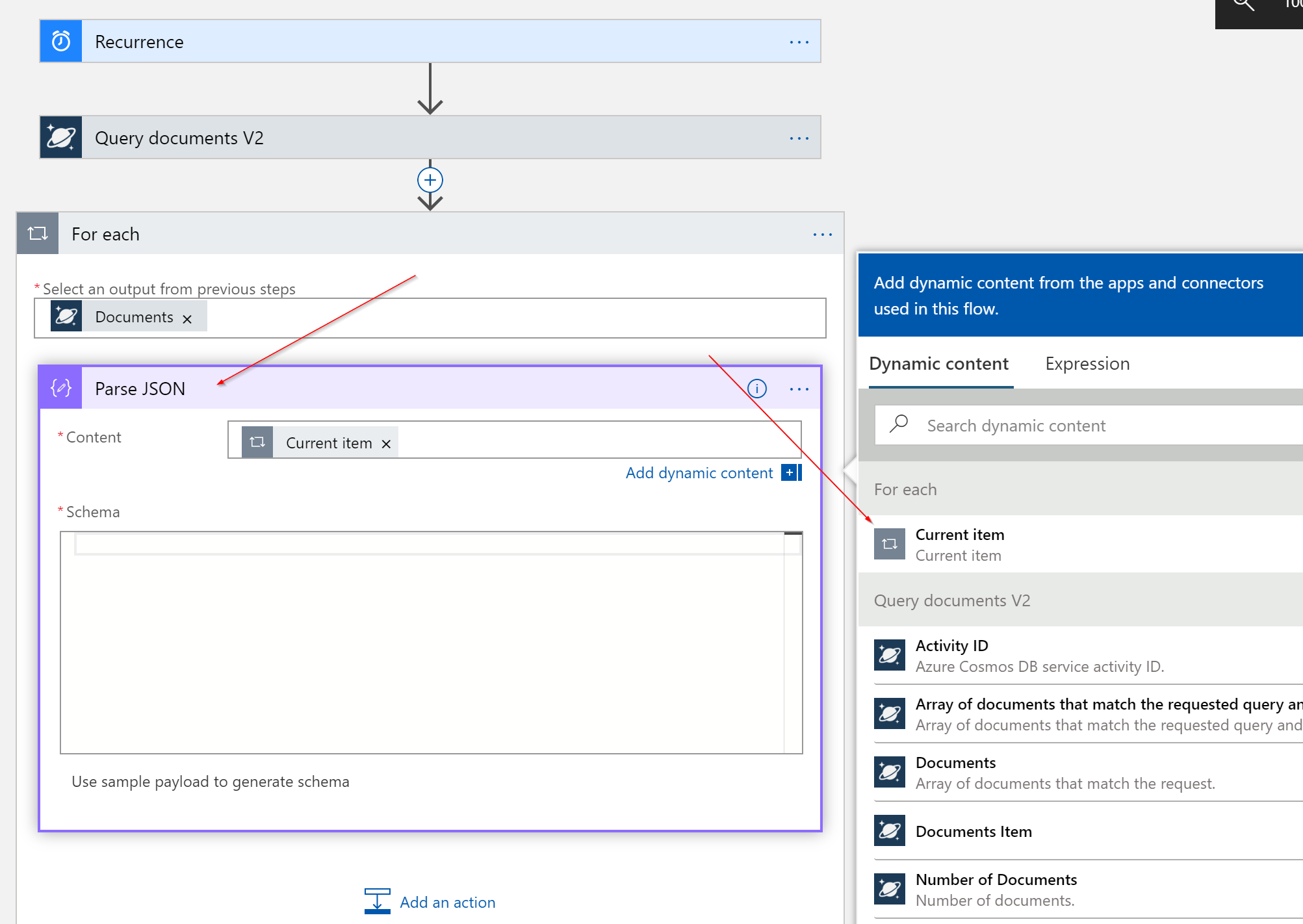

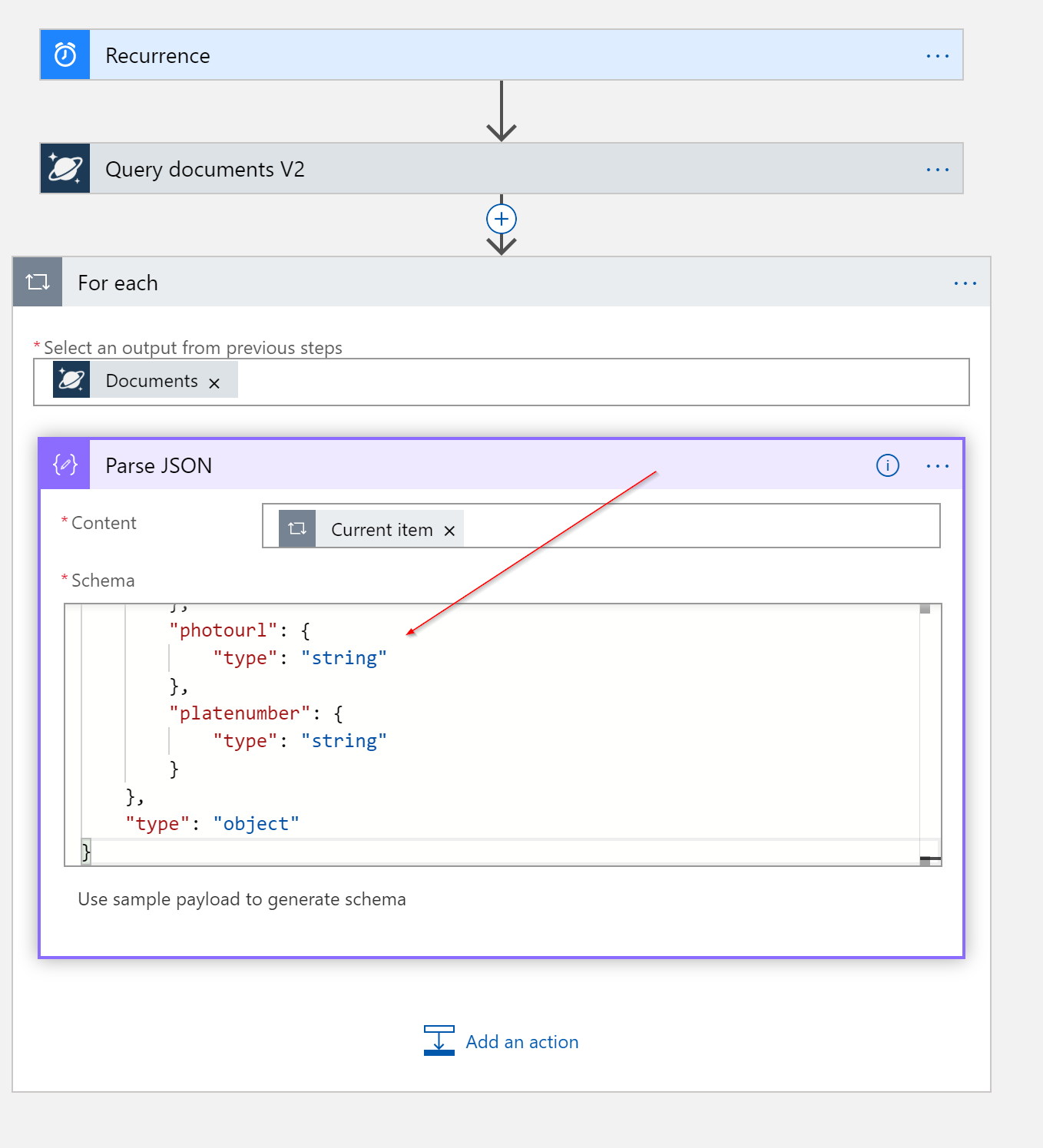

For each document returned in my query, I want to update the values and save the document. Because Cosmos DB is all JSON-a-fied, I need to parse the data before I can work with it. To do this, I select the Parse JSON data operation (another built-in component). I select the Current item output value from the For each control.

Often with Logic Apps, your life will be much easier if you get the schema of the JSON. This allows the Logic App to understand your structure and for you to be able to reference nodes by name. In my app, I add the schema for my Cosmos DB data.

If you have a sample output of the data, you can paste that in the Logic App and it will determine the schema automatically. If I were to execute this Logic App, I could grab the output of the For each control and use that sample output.

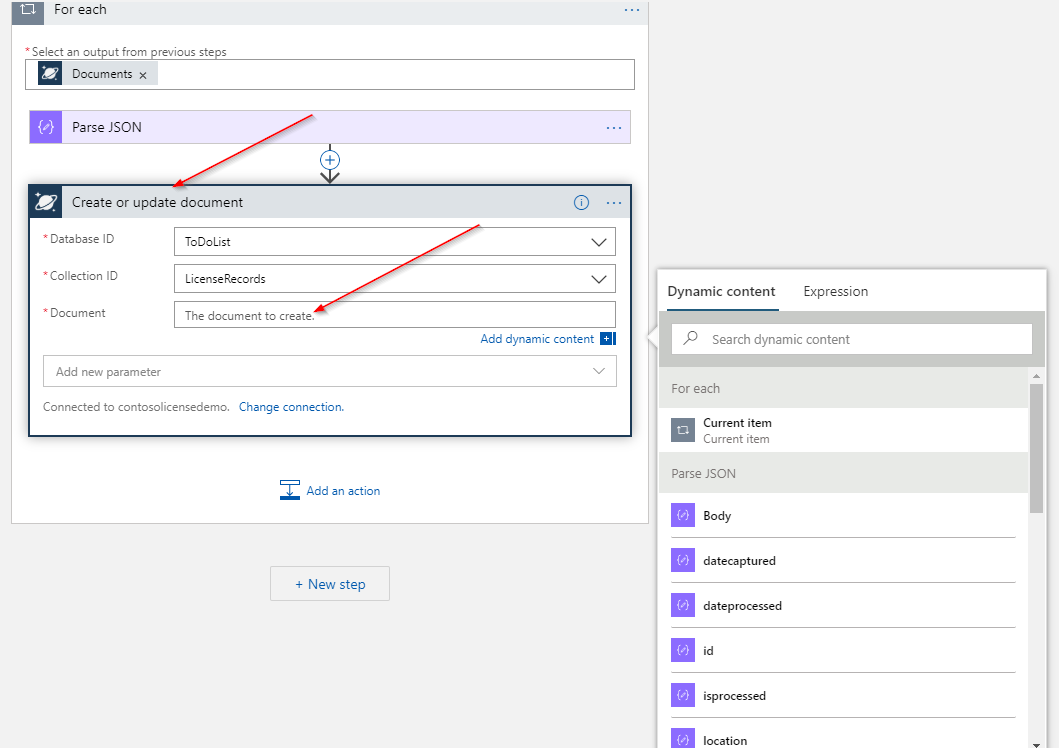

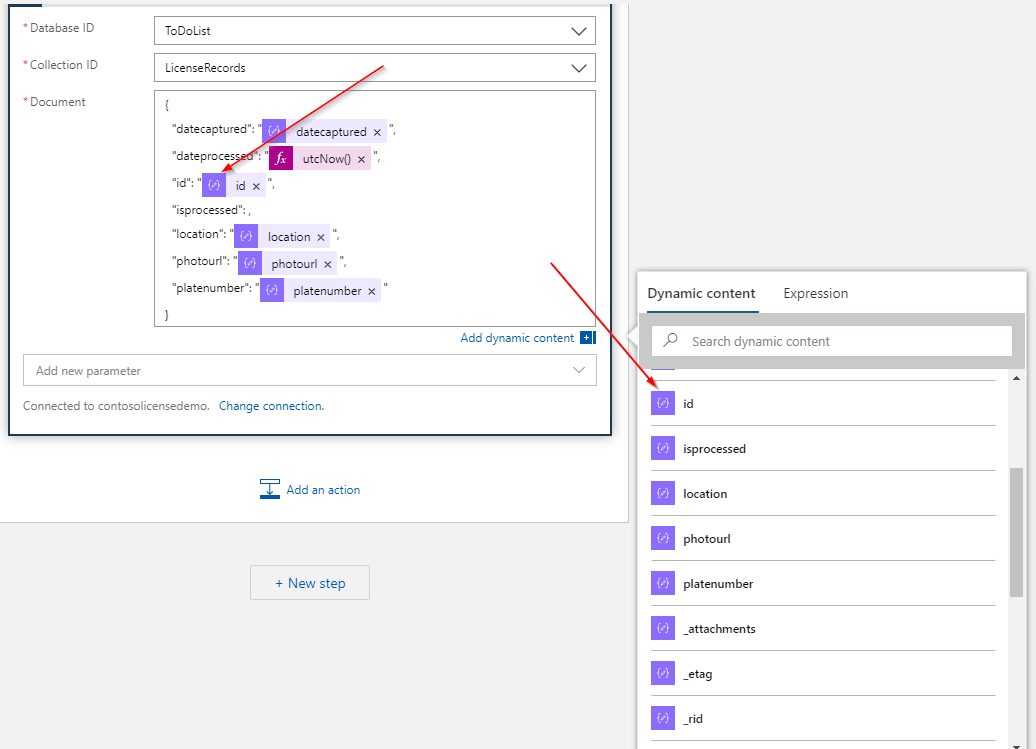

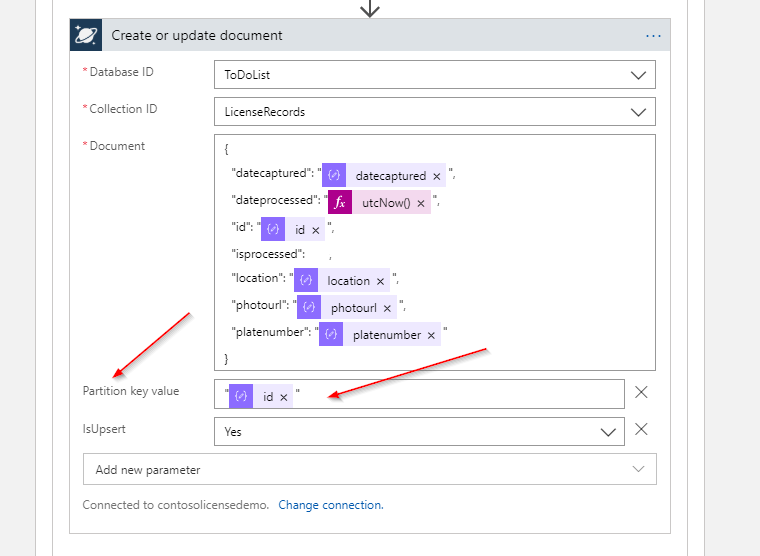
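If you’re curious what “determine the schema from a sample” means in practice, here is a small Python sketch of the idea. It is my own simplified illustration, not the Logic Apps implementation, and the sample payload uses made-up values and hypothetical field names apart from id and isprocessed.

```python
import json


def infer_schema(value):
    """Infer a simple JSON-schema-like description from a sample value."""
    # bool must be checked before int, because bool is a subclass of int in Python.
    if isinstance(value, bool):
        return {"type": "boolean"}
    if isinstance(value, int):
        return {"type": "integer"}
    if isinstance(value, float):
        return {"type": "number"}
    if isinstance(value, str):
        return {"type": "string"}
    if value is None:
        return {"type": "null"}
    if isinstance(value, list):
        schema = {"type": "array"}
        if value:
            # Simplification: assume all items look like the first one.
            schema["items"] = infer_schema(value[0])
        return schema
    if isinstance(value, dict):
        return {
            "type": "object",
            "properties": {key: infer_schema(item) for key, item in value.items()},
        }
    raise TypeError(f"Unsupported sample value: {value!r}")


if __name__ == "__main__":
    # Hypothetical sample payload for one license plate document.
    sample = json.loads('{"id": "1", "platenumber": "ABC123", "isprocessed": false}')
    print(json.dumps(infer_schema(sample), indent=2))
```

Once the structure is known, each property can be referenced by name, which is what makes the later steps so much easier.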

At this point, I know the current document I’m working with, and the Logic App knows the structure. I’m now ready to update my document within Cosmos DB. To do this, I once again select the Cosmos DB connector and select the Create or update document task. This task allows me to define the JSON for a document.

I create the JSON structure with my field names and use the Dynamic content values to pull in the Current item values.

I select the Partition key value and IsUpsert parameters. This allows me to specify the Current Item’s id value for the Partition Key Value, as well as allow the task to replace the existing document, if needed.

Note the quotes around the Partition key value, as this is a string.

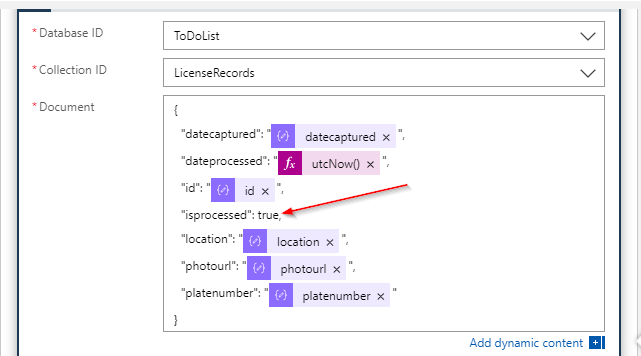

Lastly, I set the isprocessed value to true, to update the record.

This will send the document JSON to Azure Cosmos DB for the update.

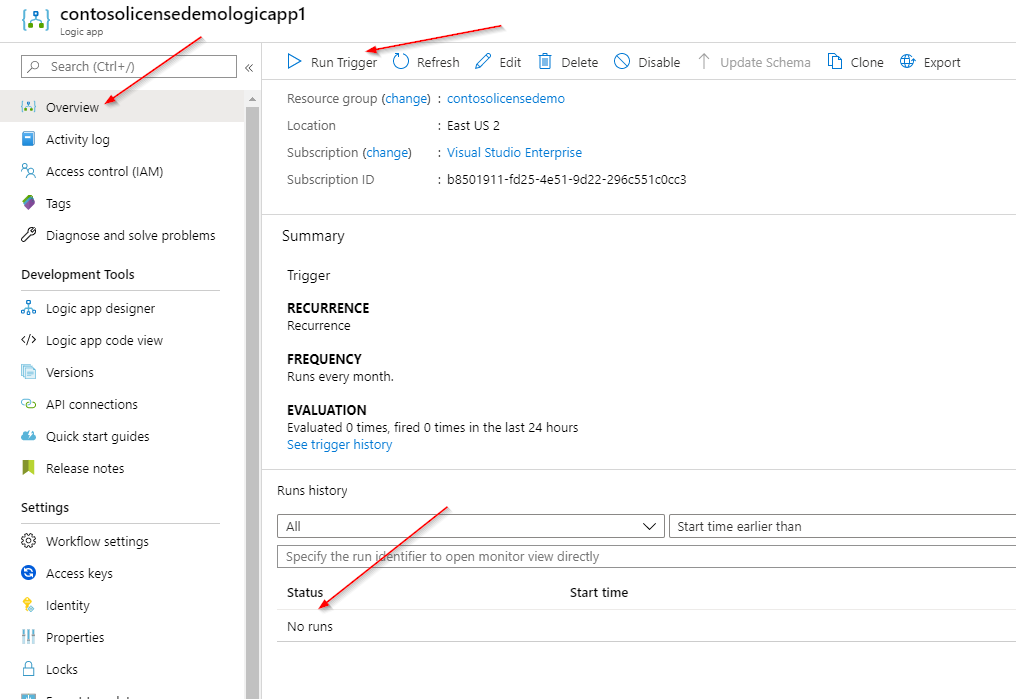
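To recap the loop-and-update logic outside of the designer, here is a minimal Python sketch. It uses an in-memory dictionary as a stand-in for the Cosmos DB collection, so nothing here calls a real Azure API. The function names, the dictionary keys, and the platenumber field are hypothetical and only meant to mirror the steps above.

```python
import copy
import json


def build_upsert_request(current_item):
    """Mirror the Create or update document step for one item (hypothetical helper)."""
    document = copy.deepcopy(current_item)  # leave the query result untouched
    document["isprocessed"] = True          # flag the record as processed
    return {
        # json.dumps adds the surrounding quotes, since the key is a string value.
        "partition_key_value": json.dumps(document["id"]),
        "is_upsert": True,
        "document": document,
    }


def process_all(query_results, store):
    """Mirror the For each loop: upsert every returned document into the store."""
    for current_item in query_results:
        request = build_upsert_request(current_item)
        # With upsert, an existing document with the same id is replaced.
        store[request["document"]["id"]] = request["document"]
    return len(query_results)


if __name__ == "__main__":
    # Hypothetical in-memory collection keyed by document id.
    store = {"1": {"id": "1", "platenumber": "ABC123", "isprocessed": False}}
    updated = process_all(list(store.values()), store)
    print(updated, store["1"]["isprocessed"])
```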

With my Logic App configured, I’m ready for testing. I could wait for the Logic App to execute or run it manually. On the Overview tab, I select Run Trigger.

After the execution, I view the results for the run. I can see by the check marks that everything completed successfully (Yay!). If things didn’t go smoothly, I’d see nasty red x’s, indicating a problem. I also confirm that the isprocessed field is set to true.

Finally, I check my Cosmos DB data to confirm the documents were updated.

In just a few clicks, I was able to create a fully functional workflow to update my Azure Cosmos DB data on a scheduled basis. By leveraging the built-in connectors and utilities, I can easily query and manipulate data. If you find that the built-in connectors don’t do what you need, build your own! You can quickly connect nearly any system to Logic Apps for seamless automation. Good luck!

This article is part of a larger series, all focused around a very cool architecture I built for processing license plates. You can check out the full code and other articles on my GitHub. Enjoy!

View Azure Logic App Template on GitHub

View the full project on GitHub

Azure Logic Apps Documentation